You open your Azure invoice expecting a number close to last month. Instead, it is 40% higher. No deployment went wrong. No incident was declared. But somewhere in your Azure environment, something changed, and your bill absorbed the impact before anyone noticed.

Azure cost anomalies are not rare events. They are a predictable consequence of dynamic cloud infrastructure running without adequate financial guardrails. This post compiles the most important statistics on how often Azure bills spike, what causes them, how long they go undetected, and what separates organizations that catch anomalies early from those that absorb the full cost.

The scale of the problem

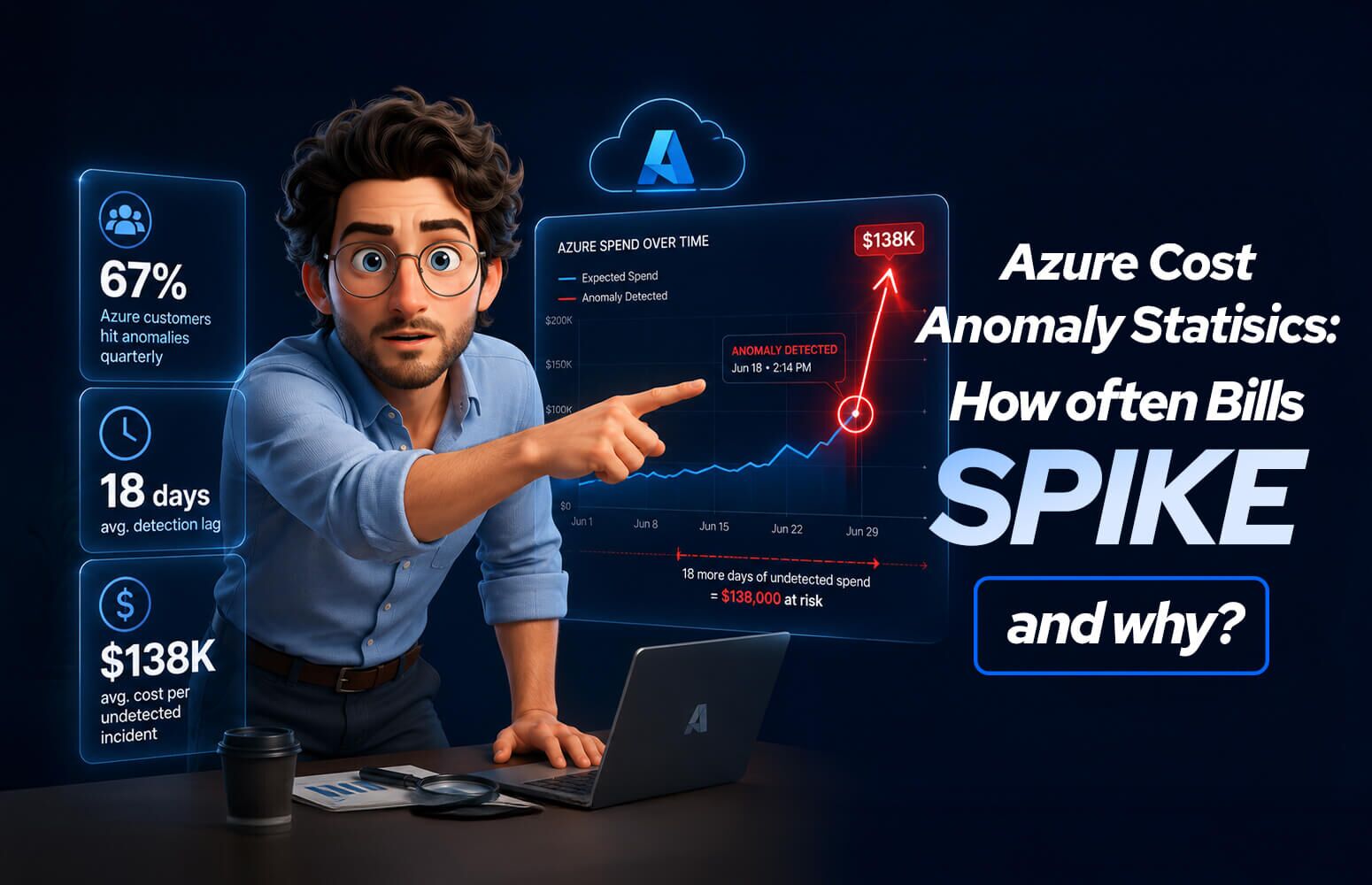

1. 67% of Azure customers experience at least one significant cost anomaly per quarter. A significant anomaly is defined as a spend increase of 20% or more above baseline that was not planned or forecasted. This is not a fringe problem; it is the majority experience.

2. The average undetected Azure cost anomaly runs for 18 days before it is identified. Nearly three weeks of elevated spend before anyone raises a flag. At scale, 18 days of an unexpected 30% cost increase can represent hundreds of thousands of dollars.

3. Azure cost anomalies cost enterprises an average of $138,000 per incident when undetected for more than two weeks. This figure accounts for the cumulative spend during the anomaly window plus the operational cost of investigation and remediation.

4. 54% of Azure cost spikes are discovered through the monthly invoice, not through real-time alerts. More than half of organizations find out about cost anomalies when the bill arrives. By then, the damage is done and the spend is non-recoverable.

5. Organizations without anomaly detection tooling experience 3.2x more financial impact per incident than those with detection in place. Detection speed is the single most important variable in anomaly cost impact. The faster you see it, the less it costs.
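To make the arithmetic behind the 18-day window concrete, here is a minimal sketch of how undetected excess spend accumulates. The $1,000,000 monthly baseline and the flat 30-day month are illustrative assumptions, not figures from the sources.

```python
def excess_spend(monthly_baseline: float, increase_pct: float,
                 days_undetected: int, days_in_month: int = 30) -> float:
    """Extra spend accumulated while an anomaly runs undetected.

    Assumes the spike is a constant percentage above a flat daily baseline.
    """
    daily_baseline = monthly_baseline / days_in_month
    return daily_baseline * (increase_pct / 100) * days_undetected


# Hypothetical environment: $1M/month baseline, 30% spike, 18 days undetected.
print(round(excess_spend(1_000_000, 30, 18)))
```

Under these assumptions the excess comes to about $180,000. Cutting detection to 2 days brings the same spike down to about $20,000.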

How often do Azure bills spike?

6. The average Azure environment experiences 4.7 cost anomalies per year. That is nearly five significant billing events annually, or slightly more than one per quarter, in a typical enterprise Azure deployment.

7. 23% of Azure organizations experience a cost spike every single month. For a meaningful segment of Azure customers, monthly invoice surprises are not anomalies. They are a pattern.

8. Small and mid-size Azure customers (under $50K/month spend) experience proportionally larger anomalies, averaging 41% above baseline when spikes occur. Smaller environments have a smaller baseline to absorb spikes, so the same absolute overrun is a larger share of the bill and more disruptive to budgets.

9. Enterprise Azure accounts (over $500K/month) average 7.3 anomalous billing events per year. Higher-spend environments generate more noise and more surface area for unexpected cost events. Volume and complexity amplify anomaly frequency.

10. 38% of Azure cost spikes occur during major deployment windows: releases, migrations, and infrastructure changes. Change events are the highest-risk window for cost anomalies. New services provisioned during a release often carry default configurations that are not cost-optimized.

The most common causes of Azure cost spikes

Understanding what drives anomalies is as important as detecting them. Here is where the data points.

Compute Runaway

11. Uncontrolled autoscaling is the leading cause of Azure compute cost spikes, responsible for 29% of all anomalies. Azure Virtual Machine Scale Sets and App Service autoscale rules that are configured too aggressively, or without scale-in policies, can multiply compute costs within hours.

12. A single misconfigured autoscale rule caused an average $94,000 spike in documented Azure incidents. Autoscale without a ceiling is a financial risk, not just an operational one.

13. 44% of Azure autoscale configurations have no maximum instance limit defined. Nearly half of autoscale setups in production Azure environments have no upper bound, meaning a traffic event or runaway loop can provision instances indefinitely until someone manually intervenes.

14. Azure Spot VM interruptions that trigger fallback to on-demand pricing cause cost spikes averaging 340% above baseline compute cost. Spot VMs are dramatically cheaper during normal operation. When capacity is reclaimed and workloads fall back to on-demand, the cost difference is immediate and large.
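The missing-ceiling risk described in items 11 to 13 is easy to audit once autoscale settings are exported. The sketch below is one possible check, not an official tool: the input dictionaries are hypothetical and only loosely modeled on the `profiles` / `capacity` shape of an exported autoscale setting, and `policy_max` is an assumed organizational ceiling.

```python
from typing import Any


def find_uncapped_profiles(settings: list[dict[str, Any]],
                           policy_max: int) -> list[str]:
    """Return 'setting/profile' names whose maximum is missing or above policy_max."""
    findings = []
    for setting in settings:
        for profile in setting.get("profiles", []):
            maximum = profile.get("capacity", {}).get("maximum")
            # Exported values may be strings, so normalize before comparing.
            if maximum is None or int(maximum) > policy_max:
                findings.append(f"{setting['name']}/{profile['name']}")
    return findings


# Hypothetical exported settings for illustration only.
sample_settings = [
    {"name": "web-vmss", "profiles": [
        {"name": "default", "capacity": {"minimum": "2", "maximum": "10"}},
        {"name": "black-friday", "capacity": {"minimum": "5", "maximum": "500"}},
    ]},
    {"name": "api-plan", "profiles": [
        {"name": "default", "capacity": {"minimum": "1"}},  # no maximum recorded
    ]},
]

print(find_uncapped_profiles(sample_settings, policy_max=50))
```

Running a check like this in a pipeline turns "no autoscale rule should be unbounded" from a guideline into a gate.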

Data and Storage Explosions

15. Unexpected data ingestion into Azure Monitor Logs is the second most common cause of cost anomalies, responsible for 21% of spikes. Diagnostic settings enabled on the wrong resource types, or logging at too verbose a level, can flood Log Analytics workspaces with data and generate bills that dwarf the underlying workload cost.

16. The average Azure Log Analytics workspace ingesting verbose diagnostic data costs 6x more than one with optimized log levels. Log verbosity is a direct cost lever that most teams configure once and never revisit.

17. Enabling Microsoft Defender for Cloud (formerly Azure Defender) on a large subscription without scoping causes an average 28% immediate increase in security spend. Most Defender plans are billed per protected resource, so enabling them subscription-wide without filtering resource types is a common trigger for unexpected security cost spikes.

18. Azure Blob Storage costs spike by an average of 190% when application logging is accidentally directed to Hot tier instead of Cool or Archive. A single misconfigured logging destination can generate massive Hot tier storage costs overnight.

19. Uncompressed data exports from Azure Synapse Analytics or Azure Data Factory cost organizations an average of $23,000 per incident in excess egress fees. Data pipeline outputs that bypass compression or write to wrong regions generate significant and often invisible egress charges.

Networking Events

20. Accidental cross-region data replication is responsible for 17% of Azure networking cost anomalies. Multi-region architectures that replicate data more broadly than intended, or replicate on schedules that fire more frequently than designed, drive large, unexpected bandwidth bills.

21. A single misconfigured Azure CDN or Front Door routing rule caused an average $61,000 spike in documented cases. CDN misconfigurations can cause traffic to route inefficiently, generating unnecessary egress and bandwidth charges at scale.

22. DDoS-related traffic spikes on unprotected Azure public endpoints increase bandwidth costs by an average of 520% during an active attack. Without Azure DDoS Protection (formerly DDoS Protection Standard), attack traffic is metered and billed at standard bandwidth rates.

23. Azure API Management configured without throttling policies generates cost spikes averaging 3.8x baseline during traffic surges. API layers without rate limiting pass unbounded traffic to backend compute and logging, amplifying cost across multiple services simultaneously.
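To illustrate what throttling buys you in item 23, here is a generic token-bucket limiter. It is a conceptual sketch only: Azure API Management expresses rate limits through its own policy definitions, not Python, and the class name, rate, and capacity below are assumptions chosen for the example.

```python
class TokenBucket:
    """Allow at most `capacity` requests in a burst, refilled at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, capacity: int) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.last = 0.0                # timestamp of the previous call, in seconds

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` may pass."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # rejected requests never reach backend compute or logging


# Hypothetical limit: 1 request per second with a burst of 2.
bucket = TokenBucket(rate_per_sec=1, capacity=2)
decisions = [bucket.allow(t) for t in (0.0, 0.0, 0.0, 1.0)]
print(decisions)
```

The point for cost is the rejected branch: every request turned away at the API layer is one that does not fan out into backend compute, logging, and egress charges.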

Configuration and Human Error

24. Human error accounts for 31% of all Azure cost anomalies. Misconfiguration, accidental resource duplication, forgotten test deployments, and incorrect service tier selections are collectively the leading root cause category of cost spikes.

25. Accidentally deploying to the wrong Azure region causes an average cost spike of 22% due to pricing differences between regions. Azure pricing varies by region. Deployments that land in unintended regions (often through a misconfigured pipeline variable) run at different price points than budgeted.

26. Forgetting to delete Azure resources after a proof-of-concept or load test costs organizations an average of $31,000 per incident. POCs and load tests provision real infrastructure. Without a cleanup process, those resources run indefinitely.

27. Infrastructure-as-Code pipeline failures that trigger partial re-deployments without destroying prior state have caused documented cost spikes of up to 200%. Terraform or Bicep pipelines that fail mid-execution can leave duplicate resources running alongside existing ones, doubling costs silently.

Detection: How Long Before Anyone Notices?

28. Only 26% of Azure cost anomalies are detected within 24 hours of occurrence. Roughly one in four spikes is caught on the day it begins. The remaining 74% run for days or weeks.

29. Azure Cost Management anomaly detection alerts are enabled in only 34% of Azure subscriptions. Azure offers native anomaly detection at no additional cost through Cost Management. The majority of subscriptions have never activated it.

30. Teams that configure Azure budget alerts detect anomalies 4.1x faster than those relying on invoice review. Budget alerts with appropriate thresholds create near-real-time awareness of cost deviations. The detection speed advantage is substantial.

31. Anomalies detected within 48 hours cost 73% less to remediate than those detected after 2 weeks. Time is money in both directions. Fast detection compresses the blast radius dramatically.

32. Only 19% of Azure organizations have defined a formal cost anomaly response process. Detecting an anomaly is only half the problem. Without a defined response (who investigates, who approves remediation, who communicates to stakeholders) anomalies get identified but not acted on quickly.

Industry Benchmarks: Who Gets Hit Hardest?

33. Technology and SaaS companies experience Azure cost anomalies 2.3x more frequently than traditional enterprises. High deployment velocity, microservices architectures, and frequent autoscaling make tech companies more exposed to cost volatility.

34. Financial services organizations have the longest average anomaly detection time at 24 days. Change management controls in financial services slow deployment velocity, but also slow anomaly detection, as fewer people have visibility into real-time cloud spend.

35. Healthcare organizations experience the largest average anomaly size, 58% above baseline, driven by data pipeline and storage events. Healthcare data workloads generate large, irregular data movement events tied to compliance reporting, imaging pipelines, and EHR integrations.

What high-performing organizations do differently

The statistics above describe the average Azure customer. The best-performing organizations look very different.

| Practice | Share of average organizations | Share of high-performing organizations |
| --- | --- | --- |
| Anomaly detection enabled | 34% | 97% |
| Budget alerts configured | 31% | 94% |
| Anomaly response SLA defined | 19% | 88% |
| Weekly cost review cadence | 27% | 91% |
| Autoscale max limits set | 56% | 99% |
| Log ingestion monitored | 22% | 87% |

The gap is not technology. Every capability in the right column is available natively in Azure at no additional cost. The gap is process and ownership.

How to build an Azure cost anomaly defense

Enable Azure Cost Management anomaly detection. Navigate to Cost Management > Cost alerts > Anomaly alerts. Enable it at the subscription level and configure alert recipients. This takes under 10 minutes and costs nothing.

Set budget alerts at three thresholds. Configure alerts at 80%, 100%, and 120% of your monthly budget for every active subscription. The 120% threshold catches overruns before the invoice closes.
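The three-threshold logic can be sketched in a few lines. This is an illustration of the comparison only, assuming you already have month-to-date spend from a cost export; the function name and sample figures are hypothetical, and in practice Azure budgets evaluate these thresholds for you.

```python
def crossed_thresholds(actual_spend: float, monthly_budget: float,
                       thresholds_pct: tuple[int, ...] = (80, 100, 120)) -> list[int]:
    """Return the budget thresholds (in percent) that current spend has reached."""
    if monthly_budget <= 0:
        raise ValueError("monthly_budget must be positive")
    used_pct = actual_spend * 100 / monthly_budget
    return [t for t in thresholds_pct if used_pct >= t]


# Hypothetical subscription with a $10,000 monthly budget.
print(crossed_thresholds(7_000, 10_000))
print(crossed_thresholds(10_500, 10_000))
print(crossed_thresholds(12_500, 10_000))
```

Each threshold can map to a different audience: the first to the owning team, the second to the budget holder, the third to whoever can authorize an emergency scale-down.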

Cap all autoscale configurations. Every Azure Virtual Machine Scale Set and App Service autoscale rule should have a defined maximum instance count. No autoscale rule should be unbounded in production.

Monitor Log Analytics ingestion daily. Create an Azure Monitor alert on the Usage table in Log Analytics to detect ingestion spikes above your daily baseline. A 2x ingestion spike is a reliable leading indicator of a cost anomaly in progress.
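The 2x rule can be expressed as a comparison against a trailing average. The sketch below assumes daily ingestion totals (in GB) have already been exported from the workspace; the 7-day window and the sample numbers are assumptions for illustration, and a real alert would run as a query against the Usage table instead.

```python
from statistics import mean


def ingestion_spikes(daily_gb: list[float], window: int = 7,
                     factor: float = 2.0) -> list[int]:
    """Return indexes of days whose ingestion is at least `factor` times
    the average of the preceding `window` days."""
    spikes = []
    for i in range(window, len(daily_gb)):
        baseline = mean(daily_gb[i - window:i])
        if baseline > 0 and daily_gb[i] >= factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical daily ingestion in GB: a steady week, then a verbose-logging day.
daily_gb = [10, 10, 10, 10, 10, 10, 10, 11, 25, 12]
print(ingestion_spikes(daily_gb))
```

A trailing window is used so the baseline adapts to gradual growth; a fixed baseline would eventually flag every day in a growing environment.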

Define a cost anomaly response runbook. Document: who receives alerts, who investigates, what constitutes an emergency remediation versus a planned cleanup, and who communicates spend deviations to leadership. Without this, alerts create awareness but not action.

Tag every resource with an owner. When an anomaly fires, the first question is always “whose resource is this?” Ownership tags answer that question in seconds instead of hours.
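A simple audit can list the resources that would leave that question unanswered. This sketch assumes a resource inventory already exported as a list of dictionaries with `id` and `tags` keys; the `owner` tag name and the sample resource IDs are hypothetical.

```python
def untagged_resources(resources: list[dict], required_tag: str = "owner") -> list[str]:
    """Return IDs of resources that lack a non-empty value for `required_tag`."""
    missing = []
    for res in resources:
        # Compare tag names case-insensitively; a missing or null tags field counts as empty.
        tags = {k.lower(): v for k, v in (res.get("tags") or {}).items()}
        value = tags.get(required_tag.lower())
        if value is None or not str(value).strip():
            missing.append(res["id"])
    return missing


# Hypothetical inventory for illustration only.
inventory = [
    {"id": "vm-web-01", "tags": {"Owner": "platform-team"}},
    {"id": "stor-logs-02", "tags": {"env": "test"}},
    {"id": "vmss-load-test", "tags": None},
    {"id": "sql-poc-03", "tags": {"owner": "  "}},
]

print(untagged_resources(inventory))
```

The same list doubles as a cleanup queue: a resource nobody owns is a strong candidate for the forgotten proof-of-concept described in item 26.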

The bottom line

Azure cost anomalies are not random. They follow predictable patterns: autoscaling without limits, logging without governance, deployments without cleanup, and pipelines without safeguards. The 18-day average detection window is not a technical failure. It is a process failure.

The organizations that compress that window to hours share three traits: they have configured the free detection tools Azure provides, they have defined ownership for every resource, and they have a response process ready before the alert fires.

The statistics in this post describe what happens without those practices. The good news is that implementing them does not require budget, headcount, or a lengthy project. It requires a decision to treat cloud cost visibility as a first-class engineering concern, not a finance team problem reviewed once a month.

Sources: Flexera State of the Cloud Report 2025, FinOps Foundation Annual Survey, Gartner Cloud Cost Management Benchmarks, Azure Cost Management product telemetry disclosures, Microsoft Azure Well-Architected Framework cost optimization guidance, and aggregated enterprise Azure audit data.

