Introduction

The Microsoft Azure Portal can scale a worker role automatically, based on a set of rules that you provide. This article is a guide to setting up worker role scaling.

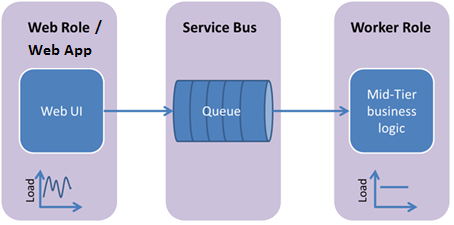

An Azure Worker Role runs application- and service-level tasks in Azure. In this article, the worker role processes messages from Azure queues or Service Bus queues and applies the required business logic to each message.

Scaling Worker Role

When many sources send messages to a queue at the same time, a single worker role can fall behind in processing them. This is where scaling comes into the picture. Scaling is the process of increasing the number of worker role instances, so that the work of a single instance is shared with the new instances.
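As a rough illustration of why extra instances help, the sketch below estimates how many instances are needed to keep up with a given message rate. The class name, method name, and the figures are hypothetical, and it assumes every instance processes messages at the same steady rate, which real workloads only approximate.

```csharp
using System;

public static class InstanceEstimate
{
    // Smallest instance count whose combined throughput keeps up with arrivals.
    // Assumption: each instance handles the same number of messages per second.
    public static int RequiredInstances(double arrivalsPerSecond, double processedPerSecondPerInstance)
    {
        if (processedPerSecondPerInstance <= 0)
            throw new ArgumentOutOfRangeException(nameof(processedPerSecondPerInstance));

        // Keep at least one instance running even when the queue is idle.
        if (arrivalsPerSecond <= 0)
            return 1;

        return Math.Max(1, (int)Math.Ceiling(arrivalsPerSecond / processedPerSecondPerInstance));
    }

    public static void Main()
    {
        // Hypothetical figures: 45 messages/s arriving, 10 messages/s handled per instance.
        Console.WriteLine(RequiredInstances(45, 10)); // prints 5
    }
}
```

With these figures a single instance would fall behind by 35 messages every second, while five instances keep the queue from growing.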

Mapping Queue With Worker Role

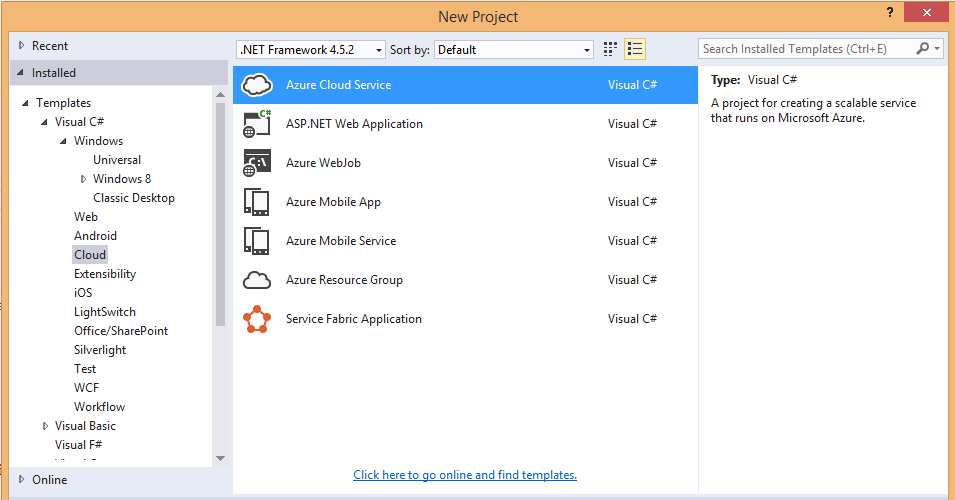

Add a new project in Visual Studio: File -> New -> Project -> Cloud -> Azure Cloud Service.

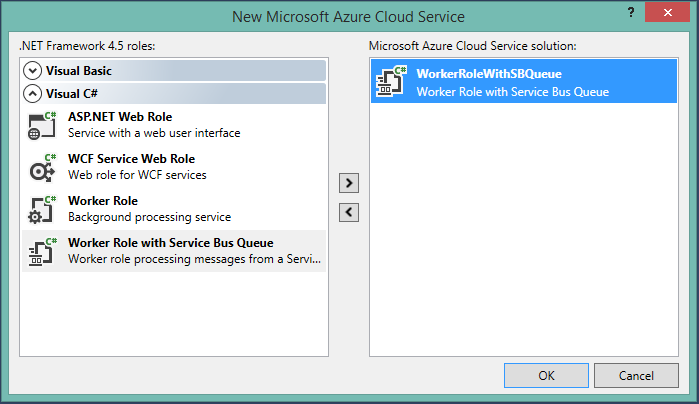

Then select the Worker Role with Service Bus Queue option, which creates a worker role that processes messages from a queue.

Inside the WorkerRole class, the queue name defaults to “ProcessingQueue”. Change it to the name of the queue that contains the messages to be processed, so that the worker role receives messages from that queue.

```csharp
const string QueueName = "ProcessingQueue";

// QueueClient is thread-safe. Recommended that you cache
// rather than recreating it on every request
QueueClient Client;
ManualResetEvent CompletedEvent = new ManualResetEvent(false);
```

Create an instance of the NamespaceManager class with the connection string of the Service Bus Namespace and check whether the queue is available. If not, a new queue is created with the specified name. Then the client for that queue is created.

```csharp
public override bool OnStart()
{
    // Set the maximum number of concurrent connections
    ServicePointManager.DefaultConnectionLimit = 12;

    // Create the queue if it does not exist already
    string connectionString = CloudConfigurationManager.GetSetting("Microsoft.ServiceBus.ConnectionString");
    var namespaceManager = NamespaceManager.CreateFromConnectionString(connectionString);
    if (!namespaceManager.QueueExists(QueueName))
    {
        namespaceManager.CreateQueue(QueueName);
    }

    // Initialize the connection to Service Bus Queue
    Client = QueueClient.CreateFromConnectionString(connectionString, QueueName);
    return base.OnStart();
}
```

Close the queue connection when the worker role stops:

```csharp
public override void OnStop()
{
    // Close the connection to Service Bus Queue
    Client.Close();
    CompletedEvent.Set();
    base.OnStop();
}
```

Process the messages in the queue using the queue client:

```csharp
public override void Run()
{
    Trace.WriteLine("Starting processing of messages");

    // Initiates the message pump; the callback is invoked for each message
    // that is received. Calling Close on the client stops the pump.
    Client.OnMessage((receivedMessage) =>
    {
        try
        {
            // Process the message
            Trace.WriteLine("Processing Service Bus message: " + receivedMessage.SequenceNumber.ToString());
        }
        catch
        {
            // Handle any message processing specific exceptions here
        }
    });

    // Block until OnStop signals that the role is shutting down
    CompletedEvent.WaitOne();
}
```

Once the worker role is published, it starts processing the messages from the specified queue. Either an Azure queue or a topic subscription can be used for this process.

Auto Scaling Worker Role

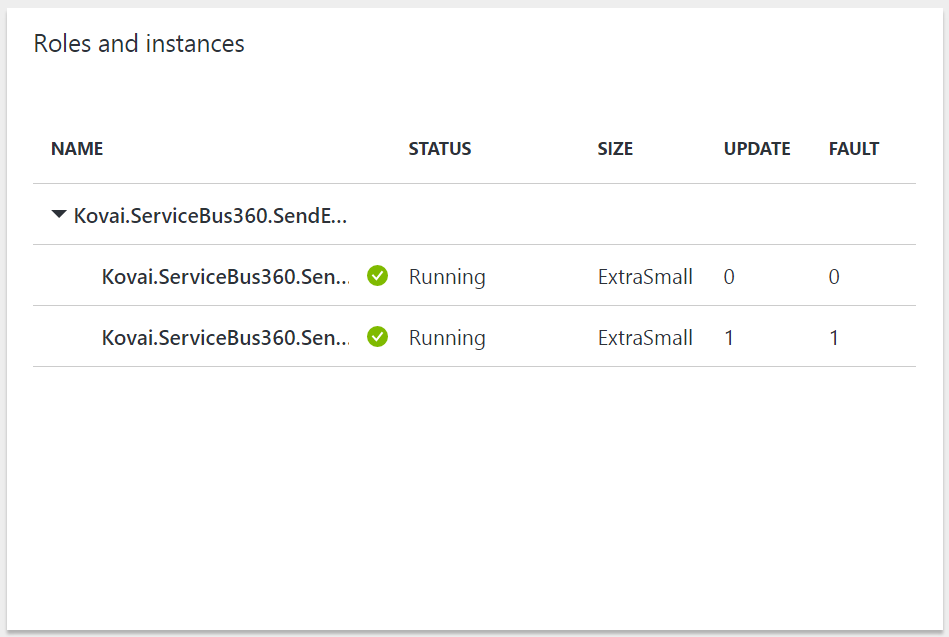

- Once the worker role is published, search for the cloud service in the new Azure Portal and select the worker role.

- The blade containing the details of that cloud service opens.

- The blade lists the number of instances currently running.

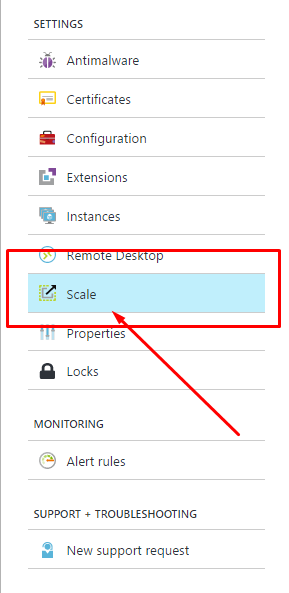

Clicking the worker role name in the blade lists the options available for the worker role. The scaling settings can be configured after clicking the Scale option.

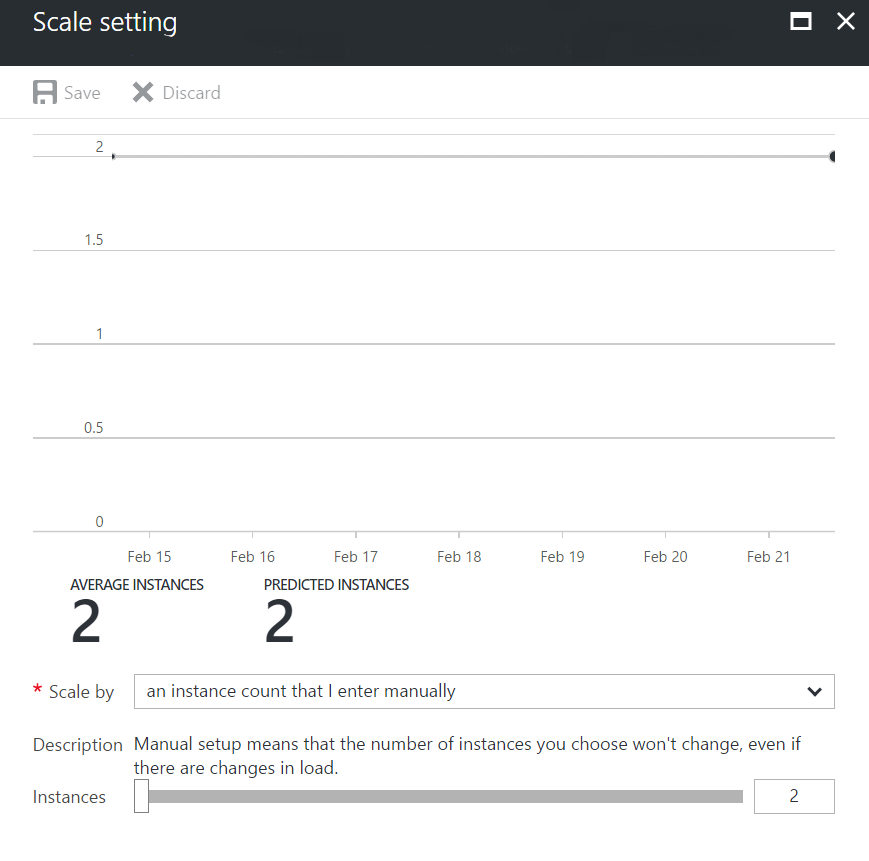

A blade opens showing the count and a graphical representation of the current worker role instances.

There are two types of scaling available for the cloud services:

- “an instance count that I enter manually” (manual)

- “schedule and performance rules” (automatic)

Manual:

Applying manual scaling is straightforward. By simply providing the number of instances, we can scale the cloud service out or in to the given count.

- Set the “Scale by” option to “an instance count that I enter manually”.

- Use the slider to increase or decrease the number of instances.

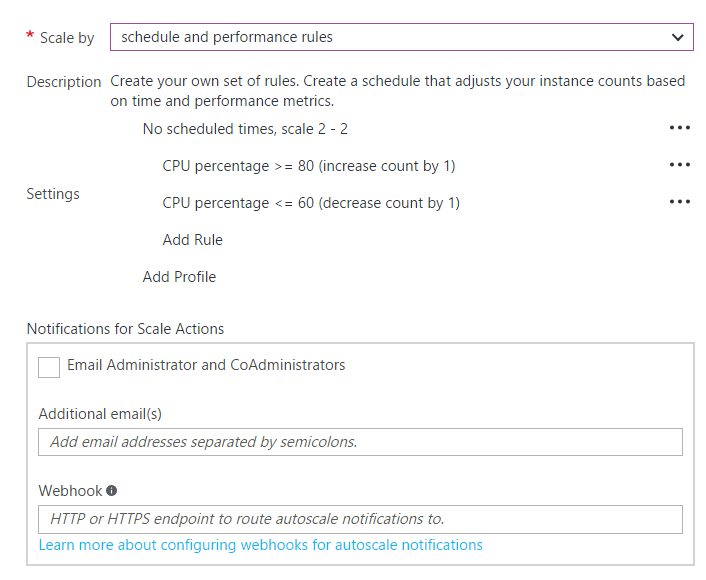

Automatic:

Automatic scaling removes the need to adjust the instance count by hand. Here we provide a set of rules, and the scaling is performed automatically based on them.

- Set the “Scale by” option to “schedule and performance rules”.

Initially, only the default profile is available, and it has no rules associated with it.

A new profile can be created, or a new rule can be added to the existing profile.

- Add Profile:

Adding a new profile requires a name for the profile and the minimum and maximum number of instances between which the worker role may scale.

In addition, the user can configure the type of the profile:

- Always

- Recurrence

- Fixed date

Always – The rules are checked against the status of the worker role all the time.

Recurrence – The user provides the days and times during which the rules are checked and the scaling is performed.

Fixed date – The rules are checked, and auto scaling is applied, only during a particular date and time range.
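The three profile types can be pictured as time windows that decide which instance limits apply at a given moment. The following is only a sketch of that idea, not the portal's implementation: every type and member name here is hypothetical, and the precedence it uses (fixed date over recurrence over always) is an assumption made for the example.

```csharp
using System;
using System.Collections.Generic;

// Ordered so that a higher value means a more specific profile (assumed precedence).
public enum ProfileKind { Always, Recurrence, FixedDate }

public class ScaleProfile
{
    public string Name;
    public ProfileKind Kind;
    public int MinInstances;
    public int MaxInstances;

    // Used by Recurrence: the days and the daily time window.
    public HashSet<DayOfWeek> Days = new HashSet<DayOfWeek>();
    public TimeSpan StartTime;
    public TimeSpan EndTime;

    // Used by FixedDate: an absolute start and end.
    public DateTime Start;
    public DateTime End;

    public bool IsActive(DateTime now)
    {
        switch (Kind)
        {
            case ProfileKind.FixedDate:
                return now >= Start && now < End;
            case ProfileKind.Recurrence:
                return Days.Contains(now.DayOfWeek)
                    && now.TimeOfDay >= StartTime
                    && now.TimeOfDay < EndTime;
            default:
                return true; // Always
        }
    }
}

public static class ProfilePicker
{
    // Returns the most specific profile that is active at the given time, or null.
    public static ScaleProfile Pick(IEnumerable<ScaleProfile> profiles, DateTime now)
    {
        ScaleProfile best = null;
        foreach (var profile in profiles)
        {
            if (!profile.IsActive(now)) continue;
            if (best == null || profile.Kind > best.Kind) best = profile;
        }
        return best;
    }

    public static void Main()
    {
        var profiles = new List<ScaleProfile>
        {
            new ScaleProfile { Name = "Default", Kind = ProfileKind.Always, MinInstances = 1, MaxInstances = 2 },
            new ScaleProfile
            {
                Name = "Weekdays", Kind = ProfileKind.Recurrence, MinInstances = 2, MaxInstances = 6,
                Days = new HashSet<DayOfWeek>
                {
                    DayOfWeek.Monday, DayOfWeek.Tuesday, DayOfWeek.Wednesday,
                    DayOfWeek.Thursday, DayOfWeek.Friday
                },
                StartTime = TimeSpan.FromHours(9),
                EndTime = TimeSpan.FromHours(18)
            }
        };

        // 1 January 2024 is a Monday; 6 January 2024 is a Saturday.
        Console.WriteLine(Pick(profiles, new DateTime(2024, 1, 1, 10, 0, 0)).Name); // prints Weekdays
        Console.WriteLine(Pick(profiles, new DateTime(2024, 1, 6, 10, 0, 0)).Name); // prints Default
    }
}
```

In this sketch the always-on profile acts as the fallback whenever no recurrence or fixed-date window matches.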

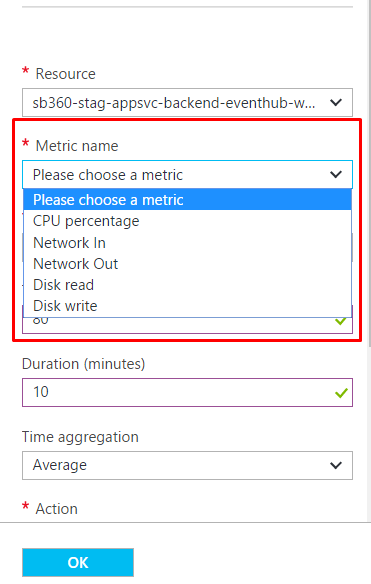

Rule – A rule is a set of configurations that determines when and how scaling is performed. Rules are based on the metrics of the worker role.

Several options are available for building the condition that decides when and how the scaling is done.

Operator – the comparison operator used in the scaling condition

Threshold – the value that the metric is compared against

Duration – how long, in minutes, the threshold condition must persist

Action – the action to perform when the condition is met

Value – the value applied to the action, such as the number of instances to add or remove

Cool down – the time to wait after a scaling action so that the worker role can stabilize

All these configurations can be applied based on the worker role itself or on the queue from which the worker role processes messages. Other resources can also be used as the metric source.

- Once everything is configured, click the save icon. The worker role will now be scaled out or in based on the queue and the configured rules.
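To make the interplay of these fields concrete, here is a minimal simulation of one rule being evaluated once per minute. It is a sketch under stated assumptions rather than how Azure evaluates rules internally: the type names, the one-sample-per-minute model, and the sample numbers are all hypothetical.

```csharp
using System;

public enum ScaleAction { IncreaseCountBy, DecreaseCountBy }

public class ScaleRule
{
    public Func<double, double, bool> Operator; // compares (metric, threshold)
    public double Threshold;
    public int DurationMinutes;   // how long the condition must persist
    public ScaleAction Action;
    public int Value;             // instances to add or remove
    public int CoolDownMinutes;   // wait time after a scaling action
}

public class RuleSimulator
{
    private readonly ScaleRule rule;
    private readonly int min;
    private readonly int max;
    private int minute;
    private int breachedMinutes;
    private int? lastActionMinute;

    public int Instances { get; private set; }

    public RuleSimulator(ScaleRule rule, int min, int max, int initial)
    {
        this.rule = rule;
        this.min = min;
        this.max = max;
        Instances = initial;
    }

    // Call once per simulated minute with the latest metric sample,
    // for example the queue length.
    public void Tick(double metric)
    {
        minute++;

        // Count consecutive minutes in which the condition holds.
        breachedMinutes = rule.Operator(metric, rule.Threshold) ? breachedMinutes + 1 : 0;

        bool coolingDown = lastActionMinute.HasValue
            && minute - lastActionMinute.Value < rule.CoolDownMinutes;
        if (breachedMinutes < rule.DurationMinutes || coolingDown) return;

        // Apply the action, staying within the profile's instance limits.
        int delta = rule.Action == ScaleAction.IncreaseCountBy ? rule.Value : -rule.Value;
        Instances = Math.Max(min, Math.Min(max, Instances + delta));
        breachedMinutes = 0;
        lastActionMinute = minute;
    }
}

public static class Program
{
    public static void Main()
    {
        // Hypothetical rule: add 1 instance when the queue length stays above 100
        // for 5 minutes, then wait 10 minutes before scaling again.
        var rule = new ScaleRule
        {
            Operator = (metric, threshold) => metric > threshold,
            Threshold = 100,
            DurationMinutes = 5,
            Action = ScaleAction.IncreaseCountBy,
            Value = 1,
            CoolDownMinutes = 10
        };
        var simulator = new RuleSimulator(rule, 1, 4, 1);

        for (int i = 0; i < 20; i++) simulator.Tick(150);
        Console.WriteLine(simulator.Instances); // prints 3
    }
}
```

In this run the first instance is added at minute 5, and the cool down delays the second one until minute 15, even though the queue length stays above the threshold throughout.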

Conclusion

Thus, by scaling the worker role, the application stays robust and reliable even when the load rises above certain thresholds. The worker role can also be scaled in when the extra instances are no longer required, so auto scaling is advantageous in terms of both expense and performance.

INTEGRATE 2026 | The Largest Microsoft Integration Tech Conference -
